Accepted Demos

Welcome to the 6G Summit, Abu Dhabi, UAE

16-17 November 2023

Venue : ROSEWOOD Hotel Abu Dhabi

Nassim Sehad, Department of Information and Communications Engineering, School of Electrical Engineering, Aalto University, Finland. Email: nassim.sehad@aalto.fi

Xinyi Tu, Department of Mechanical Engineering, School of Engineering, Aalto University, Finland. Email: xinyi.tu@aalto.fi

Akash Rajasekaran, Department of Information and Communications Engineering, School of Electrical Engineering, Aalto University, Finland. Email: akash.rajasekaran@aalto.fi

Hamed Hellaoui, Department of Information and Communications Engineering, School of Electrical Engineering, Aalto University, Finland. Email: hamed.hellaoui@aalto.fi

Riku Jäntti, Department of Information and Communications Engineering, School of Electrical Engineering, Aalto University, Finland. Email: riku.jantti@aalto.fi

Merouane Debbah, Khalifa University, Abu Dhabi, UAE. Email: merouane.debbah@ku.ac.ae

This demo addresses the challenging problem of enabling reliable immersive teleoperation in scenarios where an Unmanned Aerial Vehicle (UAV) is remotely controlled by an operator via a cellular network. We propose a novel architecture leveraging Digital Twin (DT) technology to mitigate potential risks and enhance teleoperation. The proposed demonstration includes:

1) A unique architecture for DT-enabled UAV immersive teleoperation in a VR environment, incorporating advanced 3D surroundings, weather, and network constraints.

2) A virtual UAV augmented with advanced features that are not present in the real UAV to improve teleoperation reliability.

3) An intelligent decision-making logic that utilizes information from both virtual and real environments to approve, deny, or modify actions initiated by the UAV operator, reducing potential risks.

4) The implementation of this architecture and its validation through a series of field trials showcasing its practical applicability.
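The approve/deny/modify logic in item 3 can be illustrated with a minimal sketch. This is not the authors' algorithm: the inputs (an obstacle distance reported by the digital twin and a measured link latency), the thresholds, and all names below are hypothetical and chosen only to show how information from the virtual and real environments could gate an operator command.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    APPROVE = "approve"
    DENY = "deny"
    MODIFY = "modify"


@dataclass
class Command:
    forward_speed: float  # m/s requested by the operator (hypothetical field)


def decide(cmd, twin_obstacle_distance, real_link_latency,
           stop_distance=1.0, slow_distance=5.0, max_latency=0.5):
    """Return (verdict, command to execute).

    twin_obstacle_distance: metres to the nearest obstacle in the virtual scene.
    real_link_latency: seconds, measured on the real cellular link.
    All thresholds are illustrative assumptions.
    """
    # Deny when the link is too slow or the obstacle is too close.
    if real_link_latency > max_latency or twin_obstacle_distance <= stop_distance:
        return Verdict.DENY, Command(0.0)
    # Modify: scale the speed down linearly as the obstacle gets closer.
    if twin_obstacle_distance < slow_distance:
        scale = (twin_obstacle_distance - stop_distance) / (slow_distance - stop_distance)
        return Verdict.MODIFY, Command(cmd.forward_speed * scale)
    # Otherwise pass the operator's command through unchanged.
    return Verdict.APPROVE, cmd
```

For example, `decide(Command(4.0), 3.0, 0.1)` returns a `MODIFY` verdict with the speed halved to 2.0 m/s, because the obstacle lies midway between the two assumed distance thresholds.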

The demo will include a real UAV, a virtual UAV (Digital Twin), a VR user, and an edge server. The UAV will be located at the UAV flying arena in TII and remotely controlled, interacting with obstacles to demonstrate the effectiveness of the proposed DT and decision-making algorithm in a real-world setting.

This effort contributes significantly to the current understanding and potential developments of DT technology and its benefits for remote UAV operation, thereby extending the scope of teleoperation capabilities.

Liao Jingyi, Department of Information and Communications Engineering, Aalto University, Finland. Email: jingyi.liao@aalto.fi

Kalle Koskinen, Department of Information and Communications Engineering, Aalto University, Finland. Email: kalle.koskinen@aalto.fi

Xie Boxuan, Department of Information and Communications Engineering, Aalto University, Finland. Email: boxuan.xie@aalto.fi

Kalle Ruttik, Department of Information and Communications Engineering, Aalto University, Finland. Email: kalle.ruttik@aalto.fi

Riku Jäntti, Department of Information and Communications Engineering, Aalto University, Finland. Email: riku.jantti@aalto.fi

Dinthuy Phanhuy, Orange Innovation, Chantillon, France. Email: dinthuy.phanhuy@orange.com

We demonstrate the integration of an energy-autonomous Ambient Internet of Things (AIoT) device into the cellular downlink. Our proposed system uses the LTE cell-specific reference signal to illuminate the backscatter device and uses the user equipment (UE) as the receiver. This demo will be conducted over cables using a recorded signal from a real base station as the signal source, and a software-defined radio implementation of a UE channel estimator.

The essence of the demonstration is to show how IoT technology can be used while addressing dwindling natural energy resources, e-waste, and the high labour cost of replacing batteries in cellular IoT devices.

This demonstration aims to contribute to the study and development of new massive Machine Type Communications (mMTC) solutions for mobile networks, targeting devices that require less power than the existing 3GPP low-power wide-area connectivity solutions. Furthermore, it shows backscatter communication as one of the key technology enablers of AIoT.

Abdelfatah Ali, Department of Electrical Engineering, South Valley University, Egypt & American University of Sharjah, UAE. Email: abdelfatahm@aus.edu

Mostafa F. Shaaban, Akmal Abdelfatah, Department of Electrical Engineering, American University of Sharjah, UAE. Emails: mshaaban@aus.edu , akmal@aus.edu

Maher A. Azzouz and Ahmed S. A. Awad, Department of Electrical and Computer Engineering, University of Windsor, Canada. Emails: mazzouz@uwindsor.ca , aawad@uwindsor.ca

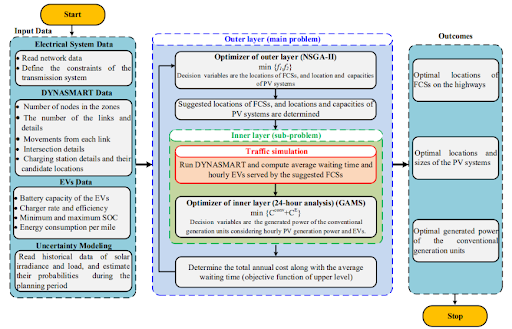

Transportation electrification and photovoltaics (PVs) have become vital components of modern and future planning problems, and the large-scale deployment of electric vehicles (EVs) requires reliable fast-charging stations (FCSs). However, random allocation of FCSs and PVs adversely impacts the performance and operation of the traffic flow and power networks. This work therefore proposes a new planning approach for the optimal allocation of FCSs and PVs in large-scale smart grids and transportation networks. Unlike existing approaches, the well-known Dynamic Network Assignment Simulation for Road Telematics (DYNASMART) is updated and employed in the proposed planning approach to accurately simulate the EVs within the traffic stream. The average waiting time of the EVs at the charging stations (including charging time) and the total annual costs are used as conflicting objective functions to be minimized. To solve this comprehensive model with conflicting objectives, a bi-layer multiobjective optimization based on metaheuristic (NSGA-II) and deterministic (GAMS) algorithms is established. The outer layer optimizes the allocation of FCSs and PVs, while the inner layer optimally dispatches the generated power of the conventional generation units. The proposed planning approach is tested on a 26-bus electrical transmission network and a 92-node transportation system. The simulation results demonstrate the efficiency of the proposed approach. The optimal allocation of FCSs and PVs with 80% EV penetration increases the net profit by 85.36% while maintaining a maximum of 30 minutes for combined charging and waiting time.
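Because waiting time and annual cost conflict, a multiobjective method such as NSGA-II ranks candidate plans by Pareto dominance rather than by a single score. The sketch below shows only that dominance filter for two minimized objectives; it is not the authors' bi-layer NSGA-II/GAMS implementation, and the candidate values are made-up placeholders.

```python
def dominates(a, b):
    """True if plan a is no worse than b in every objective and strictly
    better in at least one (all objectives are minimized)."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def pareto_front(points):
    """Keep the plans that no other plan dominates."""
    return [p for p in points
            if not any(dominates(q, p) for q in points if q != p)]


# Hypothetical candidates: (average waiting time in minutes, annual cost in arbitrary units)
candidates = [(30, 5.0), (25, 6.0), (35, 4.5), (30, 6.5), (40, 4.6)]
front = pareto_front(candidates)
```

Here `(30, 6.5)` is dominated by `(30, 5.0)` and `(40, 4.6)` by `(35, 4.5)`, so `front` keeps the remaining three plans; a planner would then choose among them according to its preference between waiting time and cost.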

Daniel K. Tettey, Research and Development, Ford Otosan, Istanbul, Turkey. Email: dtettey@ford.com.tr

Mohammed Elamassie, Electrical/Electronic Engineering, Ozyegin University, Istanbul, Turkey. Email: mohammed.elamassie@ozyegin.edu.tr

Murat Uysal, Engineering Division, New York University Abu Dhabi, Abu Dhabi, UAE. Email: murat.uysal@nyu.edu

One of the key challenges associated with visible light communication (VLC) is the limited bandwidth of commercial white LEDs, which behave as first-order low-pass filters. Multicarrier modulation schemes such as orthogonal frequency division multiplexing (OFDM) are commonly employed to overcome the distortion imposed by this bandwidth limitation. Furthermore, the optical wireless channel varies with time due to device or user mobility as well as environmental conditions, which can impair the performance of the LiFi system. Adaptive transmission allows switching between different modulation orders and is critical to satisfying the system performance requirements in terms of bit error rate, spectral efficiency, and power efficiency.

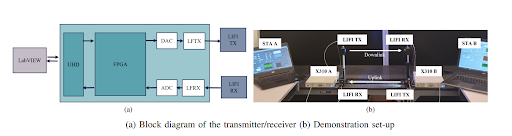

This demo presents an adaptive LiFi system on a software-defined platform that can switch between different modulations based on the channel state information. For this purpose, modified Ettus USRP X310 platforms are used, in which the RF daughterboards are replaced by LFTX/LFRX cards. For the VLC transmitter and receiver front-ends, the Hyperion Technologies LiFi R&D kit is used. The physical layer of the demo system builds upon direct current biased optical OFDM (DCO-OFDM) and supports adaptive transmission with different modulation schemes and levels, including BPSK, 4-QAM, 8-PSK, 16-QAM, and 64-QAM. The feedback link employs a low-rate on-off keying (OOK) transmission scheme to send channel state information (in terms of SNR) to the OFDM transmitter. The transmitter then selects the modulation scheme that maximizes the spectral efficiency for the channel while satisfying a predetermined error performance requirement.
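The selection rule described above can be sketched as a threshold lookup: among the modulations whose minimum SNR is met, pick the one carrying the most bits per symbol. The SNR thresholds below are illustrative placeholders, not values from the demo; in practice they would be derived from the target bit error rate.

```python
# (name, bits per symbol, minimum SNR in dB).
# The thresholds are hypothetical placeholders, not measured values.
MODULATIONS = [
    ("BPSK", 1, 7.0),
    ("4-QAM", 2, 10.0),
    ("8-PSK", 3, 15.0),
    ("16-QAM", 4, 17.0),
    ("64-QAM", 6, 23.0),
]


def select_modulation(snr_db):
    """Return the feasible modulation with the most bits per symbol,
    or None if the reported SNR is below every threshold."""
    feasible = [m for m in MODULATIONS if snr_db >= m[2]]
    if not feasible:
        return None
    return max(feasible, key=lambda m: m[1])
```

With these assumed thresholds, an SNR report of 12 dB selects 4-QAM and a report of 30 dB selects 64-QAM; below 7 dB no scheme meets the requirement and the function returns `None`.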

Mizan Abraha Gebremicheal and Ibrahim (Abe) M. Elfadel, Khalifa University, Abu Dhabi, UAE. Emails: mizan.gebremichael@ku.ac.ae , ibrahim.elfadel@ku.ac.ae

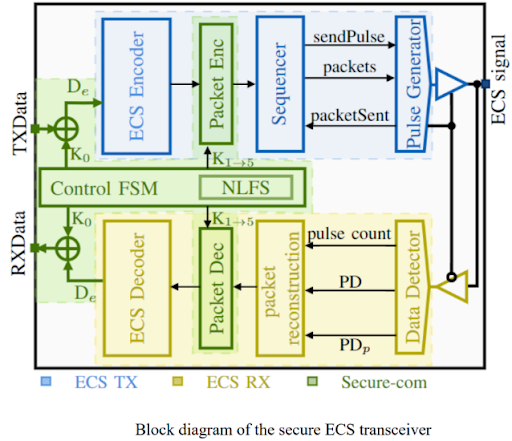

The ECS protocol enables single-wire signaling in IoT devices and sensors without the need for clock and data recovery. However, using conventional symmetric block cipher methods to secure ECS can reduce its data rate and negate its low power consumption and small footprint advantages. HSA5/1, a high-speed version of the A5/1 stream cipher, offers excellent security while retaining most ECS protocol benefits, but it comes with a significant data rate overhead. To address this issue, we present a modified HSA5/1 cipher that exploits the nature of the ECS header packets to develop an encryption method with minimal impact on the effective data rate of secure transmission. Additionally, we have implemented an extra security layer to further enhance packet privacy without affecting the data rate. The proposed secure ECS transceiver, Fig. 1, has been prototyped on an FPGA. This novel design offers notable improvements over the prior implementation, including data rate increases of 5.6%, 16%, and 150% for the minimum, average, and maximum rates, respectively, as well as security enhanced by a factor of 16. Moreover, the design maintains the energy-efficient and compact features of the ECS architecture. As part of the demo, we will demonstrate a round-trip secure data transmission using the ECS protocol on an Artix-7 FPGA. As IoT evolves, 5G will eventually reach its limits for data rate, latency, and security, and will be unable to support most future advanced IoT applications. Therefore, there is a strong motivation for a secure, high data rate, and power-efficient transceiver architecture. This work presents a compact and power-efficient transceiver architecture that is secure and easily implemented on an FPGA. The fully digital design also makes it easily portable between technologies.

Loukmane Bouchefirat, Intern, TII. Email: Loukmane.bouchefirat@tii.ae

Takieddine Fellag, Intern, TII. Email: Takieddine.fellag@tii.ae

Ibrahim Farhat, Researcher, TII. Email: Brahim.farhat@tii.ae

Wassim Hamidouche, Acting Principal Researcher, TII. Email: Wassim.hamidouche@tii.ae

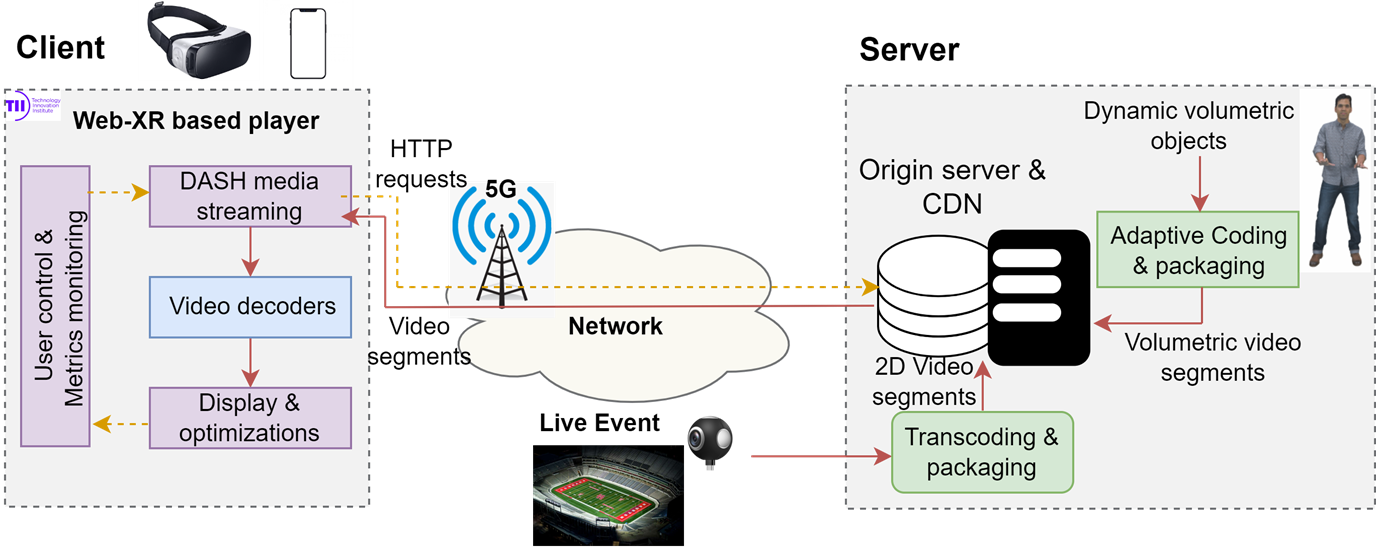

Our demonstration showcases an innovative application within the VR/AR space that addresses the challenge and demand for streaming volumetric video or point cloud video, the medium for representing natural content in VR, AR, and XR environments.

The demo combines end-to-end adaptive point cloud video streaming mechanisms, covering encoding, transmission, decoding, and rendering. Together, these elements enable the simultaneous streaming of point cloud and 2D videos. Users with VR headsets get an immersive experience with six degrees of freedom (6DoF) that adapts closely to the available bandwidth.

Volumetric video is expected to be the future of video technology and a typical use case for 6G and beyond cellular communications. Our demo addresses the need for efficient volumetric video streaming on low-power devices such as VR headsets, without compromising a fully interactive user experience.

This demonstration is in line with the trends and advancements in the realm of 6G cellular communications and the direction in which video technology is progressing.

Diana W. Dawoud, Abigail Copiaco, Husameldin Mukhtar, Shadi Atalla, Wathiq Mansoor, College of Engineering and Information Technology, University of Dubai, Dubai, UAE. Emails: ddawoud@ud.ac.ae, hhadam@ud.ac.ae, acopiaco@ud.ac.ae, satalla@ud.ac.ae, wmansoor@ud.ac.ae

This work introduces a LiFi-powered Autonomous Bus (LI-BUS) solution that integrates visible light positioning (VLP) technology alongside cutting-edge solutions. The aim of LI-BUS is to enhance the travel experience and confidence in self-driving buses by implementing the following services:

1) Enhancing confidence in self-driving cars with highly reliable LiFi-powered real-time tracking and notifications. In the proposed LI-BUS, the street light units can serve as LiFi transmitters, each assigned a distinct cell identification (ID) code, forming the basis of a robust positioning framework. By detecting the bus’s ID through the identification points on the head or tail lights, the bus’s location can be accurately computed.

2) Empowering people of determination through onboard LiFi-powered assistance for locating vacant passenger seats. In LI-BUS, VLP can provide precise centimeter-level positioning and travel direction information through an onboard visible light seat localization (VLSL) system, aiding visually impaired travelers in locating available seats.

3) Improving timely and accurate information gathering on bus seat occupancy to enable advanced seat booking and adaptive service provision. Passengers gain access to real-time information about seat availability, the bus’s current location, and the estimated arrival time at specific bus stations. Consequently, passengers can enjoy a seamless and organized boarding experience without delays.

4) Consistently enriching the passenger experience by providing dedicated virtual reality (VR) content aligned with the locations visited during the bus journey, without the need for extensive memory infrastructure. In the LI-BUS solution, the existing street lighting system, utilizing VLP technology, can be linked to nearby city sights. By using the cell ID of each street optical source (OS), the bus can access real-time immersive VR experiences tied to the specific sites it passes.

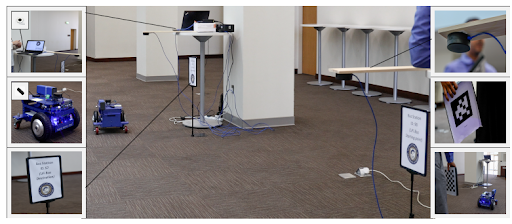

To validate the efficacy of the proposed LI-BUS, a demonstration is designed that concentrates on two pivotal implementation domains: the bus command and control system, and the on-board seat localization assistance. Within the bus command and control demonstration, a LiFiMax Controller with a LiFiMax antenna is deployed at each bus station, while the LiFiMax endpoint, coupled with a Raspberry Pi 4 processing unit (PU), is placed atop a mobile robot. During the demonstration, the robot receives the control signal and acknowledges the final station destination through an audible announcement using a speaker. Upon arriving at the second station, the robot halts accurately at the destination and announces audibly that it has reached the final station.

Within the demonstration setup for on-board seat localization assistance, a LiFiMax antenna is affixed above each seat. When a connection is established between the LiFiMax antenna and the endpoint placed on the seat, the message “seat is empty” is broadcast; otherwise, the system broadcasts the message “seat is full.” On the passenger side, an additional LiFiMax endpoint is installed, in conjunction with a Raspberry Pi 4 processing unit, to process the messages received from the LiFi system. During the demonstration, one seat’s endpoint is deliberately covered to indicate that the seat is occupied, while the other seat’s endpoint remains uncovered, signifying that the seat is vacant. While navigating between the two seats, the passenger’s endpoint accurately plays the corresponding audible message for each seat, without any signs of interference. The system designates the covered seat as “full” and the uncovered seat as “empty.” Moreover, the system updates the broadcast message promptly when the seat status changes, whether from empty to full or vice versa. This capability highlights the system’s low latency and ability to provide real-time updates, ensuring passengers are accurately informed about seat availability as it changes during the boarding process.
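The seat-status rule can be sketched in a few lines: an established antenna-to-endpoint link means the seat is empty, a blocked link means it is full, and a new message is issued only when the status changes. The function names and the boolean link samples are hypothetical; the sketch does not use any LiFiMax API.

```python
def seat_message(link_established):
    """Map the link state to the broadcast message used in the demo."""
    return "seat is empty" if link_established else "seat is full"


def status_updates(link_samples):
    """Yield a message each time the seat status changes
    (including the first sample)."""
    previous = None
    for link in link_samples:
        message = seat_message(link)
        if message != previous:
            yield message
            previous = message


# Hypothetical link samples: link up, link up, blocked, blocked, link up
updates = list(status_updates([True, True, False, False, True]))
```

For the sample sequence above, `updates` contains three messages: empty, then full once the endpoint is covered, then empty again when it is uncovered.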

Ibrahim Khadraoui, Research Engineer, TII. Email: Ibrahim.khadraoui@tii.ae

Ibrahim Farhat, Researcher, TII. Email: Brahim.farhat@tii.ae

Anis Bara, Research Engineer, TII. Email: Anis.bara@tii.ae

Wassim Hamidouche, Principal Researcher, TII. Email: Wassim.hamidouche@tii.ae

Hicham Anouar, Lead Researcher, TII. Email: Hicham.anouar@tii.ae

Rashed Alnuaimi, Associate Researcher, TII. Email: rashed.alnuaimi@tii.ae

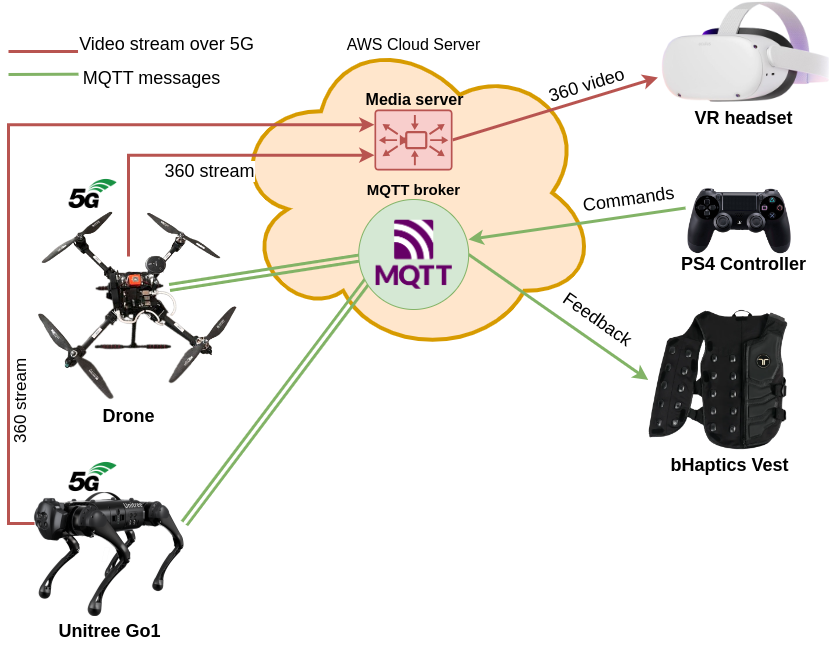

Our demo combines various state-of-the-art technologies to create a unique immersive experience using the VR-based Fleet Control system. Through a VR headset, users gain an unparalleled vantage point, enveloped in a comprehensive 360-degree camera perspective provided by multi-source immersive streams from both aerial and ground vehicles.

A combination of advanced equipment takes this immersive experience to the next level. An innovative VR treadmill provides enhanced movement control, a feature usually missing from traditional VR setups. The haptic vest takes immersion one step further by providing tactile feedback to users, embodying user engagement in the virtual environment.

Precise control over various robotic vehicles, both airborne (UAV) and terrain-based (Unitree Dog robot), is achieved through the integration of a controller joystick and connected gloves, allowing users to issue control actions in real time. This interaction is broadcast via a specialized immersive web application that users can access with ease.

5G network capabilities are employed to substantially reduce latency issues tied to real-time interaction, trimming down time lags to sub-second levels. Consequently, not only does this system enable an immersive scenario, but it also facilitates visualization of and participation in realistic drone flight scenarios – all from the comfort of the user’s chosen geographical location.

This demonstration provides key insights into remote fleet management systems: their potential, their challenges, and the possibilities they hold for technological innovation.

Mohammed Abduljabbar, Dept. of Computer Science & Software Engineering, United Arab Emirates University, Al Ain, UAE. Email: 201970087@uaeu.ac.ae

Ganzorig Batnasan, Dept. of Computer Science & Software Engineering, United Arab Emirates University, Al Ain, UAE. Email: gbatnasan@uaeu.ac.ae

Munkhjargal Gochoo, Dept. of Computer Science & Software Engineering, United Arab Emirates University, Al Ain, UAE. Email: mgochoo@uaeu.ac.ae

Munkh Erdene Otgonbold, Dept. of Computer Science & Software Engineering, United Arab Emirates University, Al Ain, UAE. Email: omunkuush@uaeu.ac.ae

Ahmed Alshamsi, Dept. of Computer Science & Software Engineering, United Arab Emirates University, Al Ain, UAE. Email: 202031891@uaeu.ac.ae

Naser Alsaedi, Dept. of Computer Science & Software Engineering, United Arab Emirates University, Al Ain, UAE. Email: 202032422@uaeu.ac.ae

Charles Vanwynsberghe, TII. Email: charles.vanwynsberghe@tii.ae

Anis Bara, Technology and Innovation Institute Abu Dhabi, UAE. Email: Anis.bara@tii.ae

Jiguang He, Technology and Innovation Institute Abu Dhabi, UAE. Email: jiguang.he@tii.ae

Aymen Fakhreddine, Technology and Innovation Institute Abu Dhabi, UAE. Email: aymen.fakhreddine@tii.ae

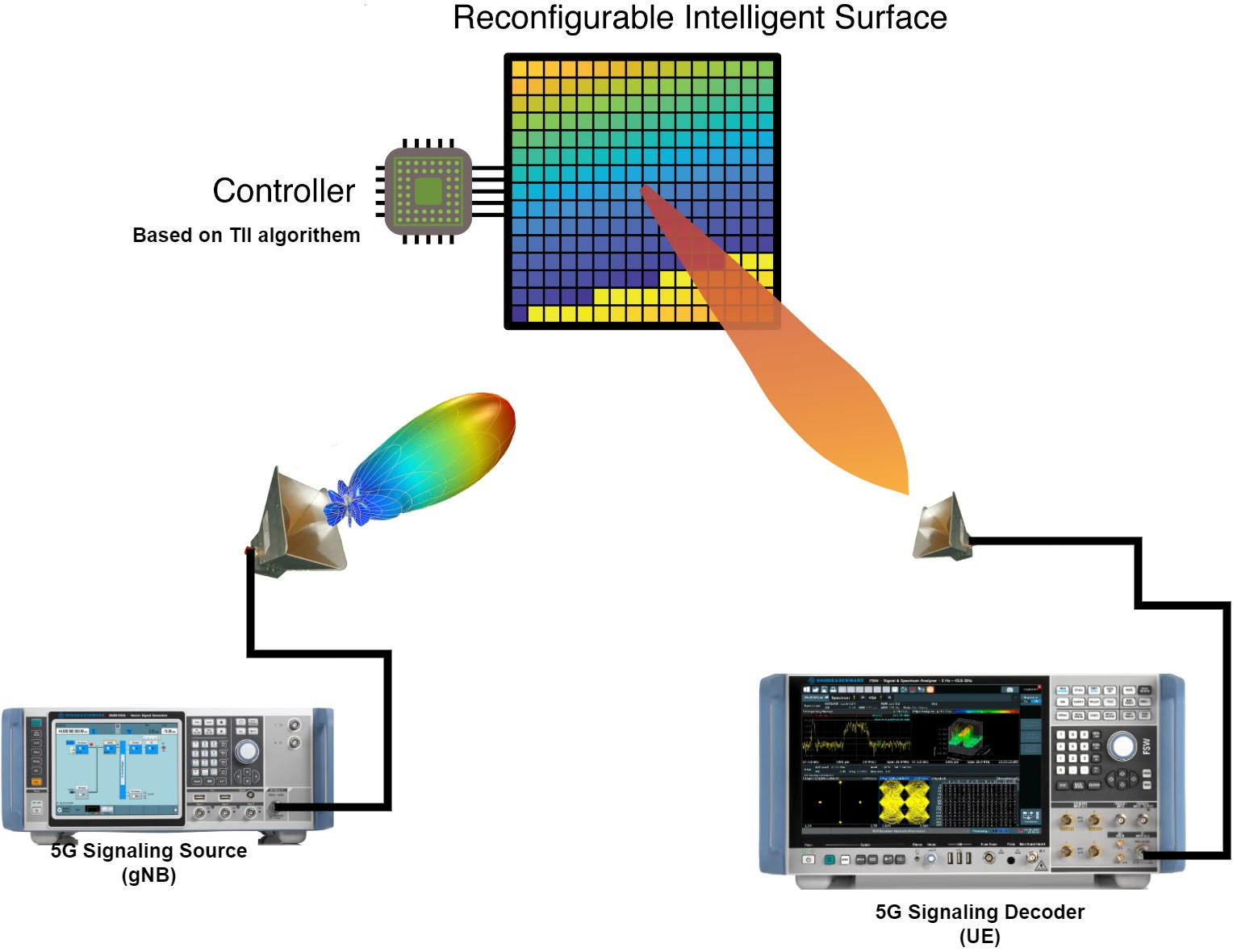

Explore the cutting-edge advancements in 5G communication with our latest demo. Dive into the intricacies of 5G signaling in the millimeter-wave FR2 spectrum and see how Reconfigurable Intelligent Surfaces (RIS) can optimize signal strength, enhance coverage, and ensure efficient spectral usage. The combination of 5G mmWave signaling with RIS technologies promises a significant leap in wireless communication, paving the way for real-time applications, augmented reality, and a more connected world.

Bilel CHERIF, Technology and Innovation Institute Abu Dhabi, UAE. Email: bilel.cherif@tii.ae

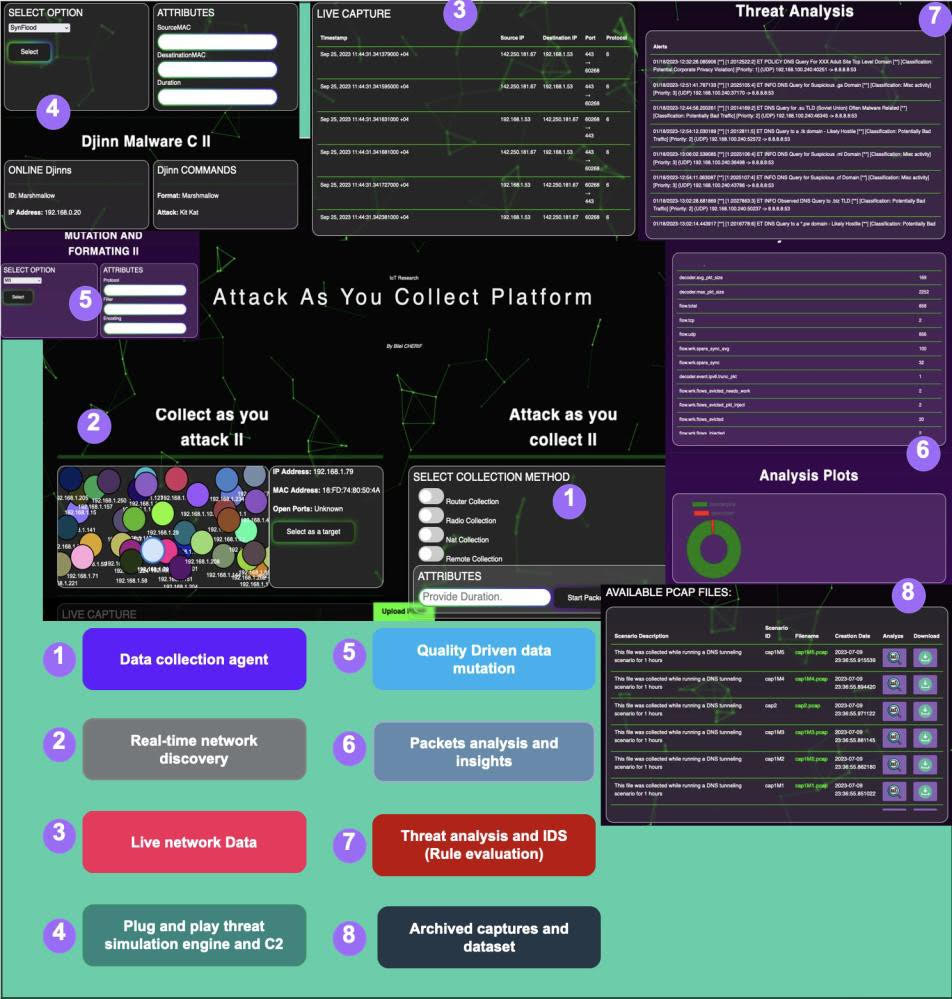

The number of connected devices is expected to grow exponentially in the coming years. According to [1], the latest available data shows that there are approximately 15.14 billion connected IoT devices. This figure is expected to almost double to 29.42 billion by 2030. Most IoT devices follow neither standardized practices nor standardized architectures. IoT security remains questionable today because it depends heavily on the manufacturer's design decisions. Securing IoT devices through classic standard practices is of limited use, as those practices do not map well to this context.

This demo will highlight novel modeling and interaction technologies that have been implemented in the Attack As You Collect Platform to enable IoT device behavior characterization, threat simulation, and analysis in a context-based security fashion. The proposed modeling pushes the boundaries toward a network-agnostic approach that can be applied independently of the underlying networking technology (Cellular Network, WiFi, BLE, Zigbee). The platform ships with a tiny LLM (FalCopilot) that assists users in summarizing the data context and exploring the different pentesting sessions. The showcased copilot feature is an early PoC integration that highlights the advantage AI assistance could provide for these tasks.

During the demo, the platform will be used to trigger devices, monitor their behavior, and perform various threat analyses and augmentations on a set of specific scenarios. The demo will be applied to a home IoT network that includes a WiFi-enabled router connected to a 5G network.

The main scientific contributions of our work are: